A financial institution is breached. Somewhere in a stack of 10,000 alerts generated overnight, the signal exists. The SIEM flagged it. The XDR flagged it. No one saw it, because no human can review 10,000 alerts and reason across them at once, and the tools that generated them cannot reason at all.

This is not a staffing problem. It is an architecture problem.

The Security Operations Center was designed around the human analyst as the reasoning unit and the SIEM as the data aggregator. That model is collapsing under its own weight. According to CardinalOps’ 2025 State of SIEM Detection Risk Report, enterprise SIEMs cover only 21% of MITRE ATT&CK techniques despite ingesting data that could theoretically cover 90%. The SANS 2025 SOC Survey found that 66% of teams cannot keep pace with alert volumes, and the Verizon 2024 DBIR noted that 74% of breaches had alerts that were generated but ignored. The analysts exist. The data exists. The gap is reasoning capacity.

Generative AI has been proposed as the fix, but adding a single large language model (LLM) to a SIEM simply relocates the bottleneck. A monolithic LLM asked to analyse a complex incident across network telemetry, vulnerability context, user behaviour, and regulatory obligations simultaneously will hallucinate, truncate, or generalise. A 2025 study demonstrated that GPT-class models failed to flag any of 100 fabricated CVE-IDs as invalid, instead generating plausible-looking advisories for nonexistent vulnerabilities. A single model doing everything does nothing well. The answer is not a smarter tool. It is a smarter team.

The Specialist Model

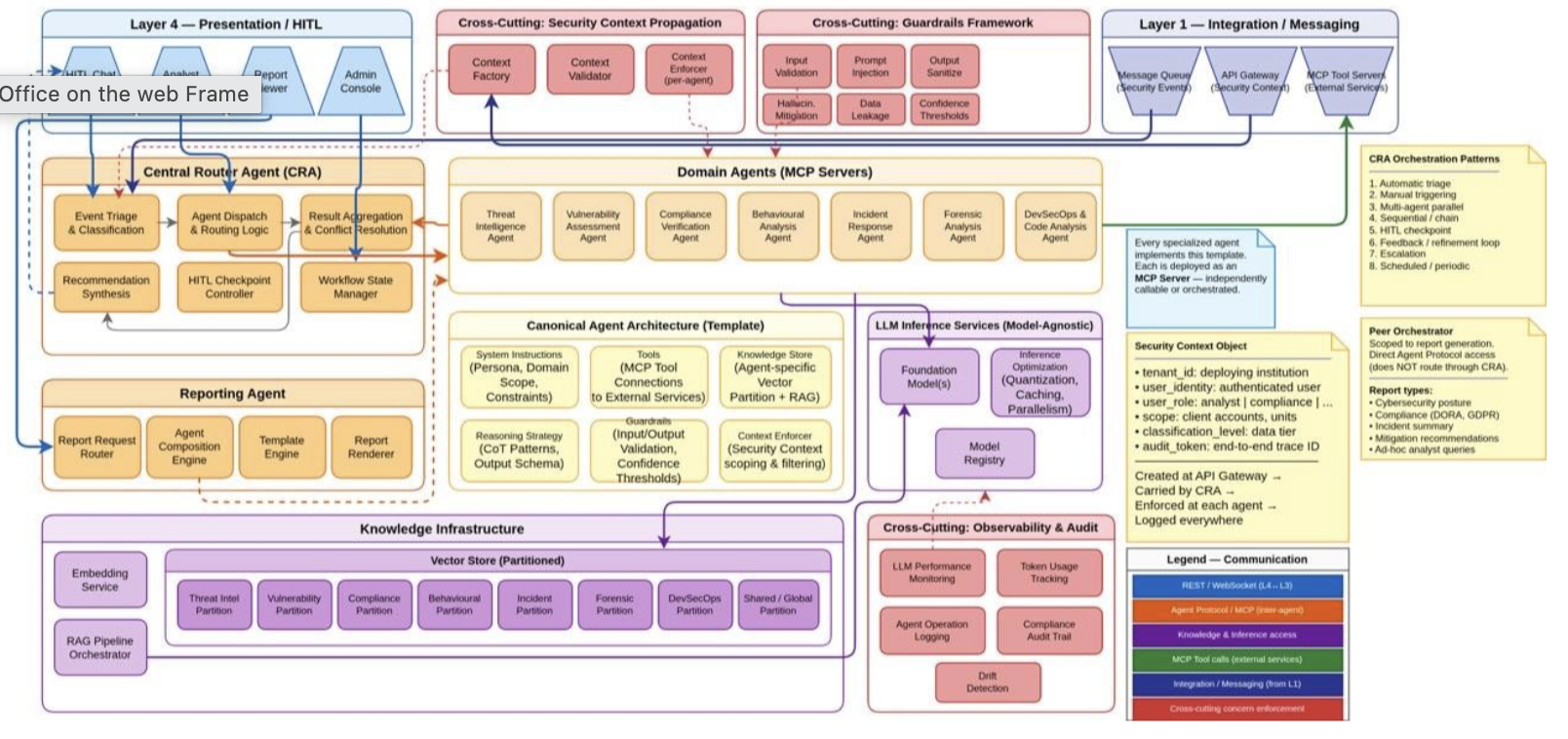

CyberAId’s LLM Orchestration Layer is built around a core insight borrowed from how expert security teams operate: no single analyst is simultaneously a threat intelligence expert, a forensic investigator, a compliance officer, a vulnerability researcher, and a code auditor. Expertise is domain-specific, and effective coordination across domains is what produces sound security decisions.

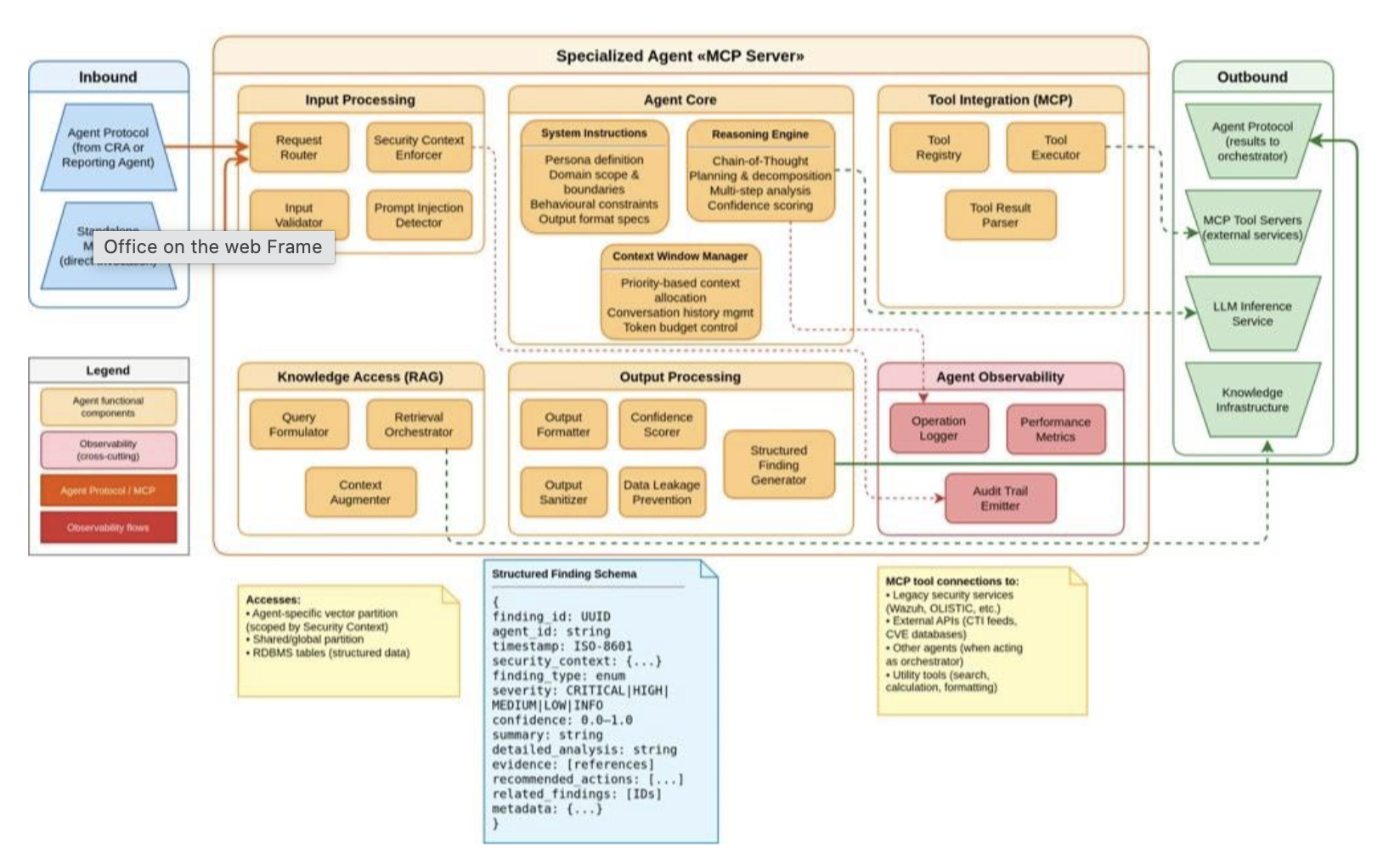

CyberAId implements this as seven specialised agents, each deployed as an independent MCP Server with its own system instructions, domain-specific knowledge partition, and toolset:

- Threat Intelligence Agent — tracks indicators of compromise, maps adversary TTPs against MITRE ATT&CK, correlates campaigns across CTI feeds (STIX/TAXII, ISAC sharing), and attributes threats to known actors.

- Vulnerability Assessment Agent — scores exploitability and impact using CVE/NVD data, cross-references patch availability, and prioritises remediation against the institution’s actual exposure profile.

- Compliance Verification Agent — maintains awareness of DORA, GDPR, PCI DSS v4.0, and NIS2 obligations, mapping findings to specific regulatory requirements and generating audit-ready documentation.

- Behavioural Analysis Agent — runs UEBA logic against established baselines, identifies insider threats, and flags deviations that signature-based detection cannot surface.

- Incident Response Agent — executes structured containment playbooks, coordinates cross-system isolation actions, and manages recovery sequencing with full state tracking.

- Forensic Analysis Agent — reconstructs event timelines from heterogeneous log sources, preserves evidence chains, and produces findings in a format that meets legal and regulatory standards for digital forensics.

- DevSecOps & Code Analysis Agent — performs static and dynamic code analysis, identifies introduced vulnerabilities, and validates SDLC compliance — connecting the development pipeline to the operational security posture.

Each agent is independently callable. Each produces output in a standardised Finding Schema that carries severity, a calibrated confidence score, evidence references, and recommended actions. This makes findings composable: the Central Router Agent (CRA) aggregates them across domains, resolves conflicts, and produces unified recommendations. Critically, a separate Reporting Agent can query the domain agents directly without routing through the CRA, so compliance reporting and incident response can run concurrently without contention.
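A minimal Python sketch of how findings that share one schema might be merged by a router. The field names, the `Severity` scale, and the conflict rule in `aggregate` (the most severe assessment wins and carries the highest confidence reported at that severity) are illustrative assumptions, not the actual Finding Schema or CRA logic.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Integer ordering lets max() pick the most severe value.
    INFO = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    agent: str                 # specialist that produced the finding
    entity: str                # asset, account, or indicator it concerns
    severity: Severity
    confidence: float          # calibrated probability in [0, 1]
    evidence: tuple[str, ...]  # references to logs, CTI records, scan output
    actions: tuple[str, ...]   # recommended next steps

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


@dataclass(frozen=True)
class Recommendation:
    entity: str
    severity: Severity
    confidence: float
    agents: tuple[str, ...]
    evidence: tuple[str, ...]
    actions: tuple[str, ...]


def aggregate(findings: list[Finding]) -> list[Recommendation]:
    """Merge per-agent findings into one recommendation per entity."""
    by_entity: dict[str, list[Finding]] = {}
    for f in findings:
        by_entity.setdefault(f.entity, []).append(f)

    merged = []
    for entity, group in by_entity.items():
        # Conflict rule (assumed): the most severe assessment wins, and its
        # confidence is the highest confidence reported at that severity.
        severity = max(f.severity for f in group)
        confidence = max(f.confidence for f in group if f.severity == severity)
        merged.append(Recommendation(
            entity=entity,
            severity=severity,
            confidence=confidence,
            agents=tuple(sorted({f.agent for f in group})),
            # dict.fromkeys de-duplicates while keeping first-seen order.
            evidence=tuple(dict.fromkeys(e for f in group for e in f.evidence)),
            actions=tuple(dict.fromkeys(a for f in group for a in f.actions)),
        ))
    # Most severe first; ties broken by confidence.
    merged.sort(key=lambda r: (r.severity, r.confidence), reverse=True)
    return merged
```

Taking the maximum severity is a deliberately conservative choice: a disagreement between agents escalates a finding rather than suppressing it. A production router might instead weight each agent by its historical calibration.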

Beyond Rules: How Agents Reason

The gap between a SIEM correlation rule and an agent is the gap between pattern matching and analysis. A SIEM fires when a threshold is crossed. An agent reasons about why the threshold was crossed, what context surrounds it, what the adversary’s likely objective is, and what the appropriate response is, drawing on retrieved knowledge from MITRE ATT&CK, live CTI feeds, and institution-specific operational history.

This is grounded reasoning, not unconstrained generation. Each agent runs a retrieval-augmented pipeline against a partitioned vector store: the Threat Intelligence Agent queries its own partition; the Compliance Agent queries a different one. Retrieval is scoped by a Security Context, a structured object created at the API Gateway and enforced within each agent, which carries the tenant identity, user role, data classification level, and audit token. As a result, Client A’s threat intelligence never bleeds into Client B’s analysis. Research from PNNL demonstrated that graph-augmented retrieval across CVE→CWE→CAPEC→ATT&CK mappings enables agents to produce cross-framework correlations that ungrounded LLMs structurally cannot.
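The scoping rule can be sketched in Python as follows, assuming an in-memory store with toy two-dimensional embeddings. `SecurityContext`, `Chunk`, `ROLE_PARTITIONS`, and `PartitionedStore` are hypothetical names; a real deployment would push the same tenant, partition, and classification filters down into the vector database’s own metadata filtering rather than scanning in Python.

```python
import math
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SecurityContext:
    tenant_id: str
    user_role: str
    clearance: int       # highest data classification level the caller may read
    audit_token: str


@dataclass(frozen=True)
class Chunk:
    tenant_id: str
    partition: str       # e.g. "threat_intel" or "compliance"
    classification: int  # data classification level of this chunk
    text: str
    embedding: tuple[float, ...]


# Hypothetical role-to-partition policy; a real system would load this from config.
ROLE_PARTITIONS = {
    "threat_intel_agent": {"threat_intel"},
    "compliance_agent": {"compliance"},
}


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return dot / norm if norm else 0.0


@dataclass
class PartitionedStore:
    chunks: list[Chunk]
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def search(self, ctx: SecurityContext, partition: str,
               query: tuple[float, ...], k: int = 3) -> list[Chunk]:
        if partition not in ROLE_PARTITIONS.get(ctx.user_role, set()):
            self.audit_log.append((ctx.audit_token, partition, "denied"))
            raise PermissionError(f"{ctx.user_role} may not read {partition}")
        # Filter on tenant, partition and classification BEFORE ranking, so
        # out-of-scope chunks never reach the scorer or the model's prompt.
        in_scope = [
            c for c in self.chunks
            if c.tenant_id == ctx.tenant_id
            and c.partition == partition
            and c.classification <= ctx.clearance
        ]
        hits = sorted(in_scope, key=lambda c: cosine(query, c.embedding),
                      reverse=True)[:k]
        self.audit_log.append((ctx.audit_token, partition, f"returned={len(hits)}"))
        return hits
```

The important property is ordering: filtering happens before similarity ranking, so an out-of-scope chunk can never outrank an in-scope one or leak into the prompt, and every query, including a denied one, is tied back to its audit token.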

Tool Integration: MCP and CLI

Agents interact with the security infrastructure through MCP Tool Servers, a layer that wraps existing services (SIEM/XDR, vulnerability scanners, risk assessment engines, CTI platforms) behind a standardised, auditable interface. MCP converts the integration problem from N×M to N+M: rather than a bespoke connector for every agent/service pair, each of the N agents implements the protocol once as a client, and each of the M services is exposed once as a server. It also enables runtime tool discovery and provides the access control and audit logging that regulated environments require.
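To make the access-control and audit properties concrete, here is an in-process Python sketch that mirrors two MCP operations, tool discovery (`tools/list`) and invocation (`tools/call`). It does not use the MCP SDK or its JSON-RPC transport; `ToolServer`, `Tool`, the role names, and the `search_siem` stub are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    allowed_roles: frozenset[str]
    handler: Callable[..., Any]


@dataclass
class ToolServer:
    """In-process stand-in for an MCP Tool Server (not the real SDK or wire protocol)."""
    name: str
    tools: dict[str, Tool] = field(default_factory=dict)
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def list_tools(self, role: str) -> list[dict[str, str]]:
        # Analogue of tools/list: callers discover only what they may use.
        return [
            {"name": t.name, "description": t.description}
            for t in self.tools.values()
            if role in t.allowed_roles
        ]

    def call_tool(self, role: str, name: str, audit_token: str, **arguments: Any) -> Any:
        # Analogue of tools/call, with an authorisation check and an audit entry.
        tool = self.tools.get(name)
        allowed = tool is not None and role in tool.allowed_roles
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "server": self.name,
            "tool": name,
            "role": role,
            "audit_token": audit_token,
            "arguments": arguments,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {name!r}")
        return tool.handler(**arguments)


def search_siem(query: str, limit: int = 10) -> list[str]:
    # Hypothetical stub; a real handler would call the SIEM's search API.
    return [f"event matching {query!r}"][:limit]
```

Because discovery is filtered by role, an agent cannot even see tools outside its remit, and every call, allowed or denied, leaves an audit entry.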

Where MCP overhead is a concern (e.g., high-frequency telemetry processing, deterministic forensic queries, time-critical containment operations), agents invoke security tooling directly through its CLI. Wrapping tools such as osquery, Nmap, or EDR CLIs as callable agent functions is a well-established pattern in production deployments; it reduces latency without sacrificing the orchestration layer’s auditability, as long as each invocation is still logged. The architecture is pragmatic: MCP for flexible reasoning across services, direct invocation for throughput-sensitive operations.
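A minimal Python sketch of wrapping a CLI as an agent function, assuming the wrapper’s job is to pin the binary, bound the runtime, and log every invocation. `CliTool` and `CliResult` are hypothetical names. Passing `argv` as a list keeps the shell out of the loop, but it does not stop argument injection; a real wrapper would also allowlist flags per tool.

```python
import json
import subprocess
import time
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class CliResult:
    argv: tuple[str, ...]
    returncode: int
    stdout: str
    stderr: str
    duration_s: float

    def parse_json(self) -> Any:
        # For tools that can emit JSON on stdout.
        return json.loads(self.stdout)


class CliTool:
    """Expose one fixed binary as a callable agent function, with an audit trail."""

    def __init__(self, binary: str, audit_log: list[dict[str, Any]], timeout_s: float = 10.0):
        self.binary = binary
        self.audit_log = audit_log
        self.timeout_s = timeout_s

    def __call__(self, *args: str) -> CliResult:
        # argv is passed as a list with shell=False (the default), so shell
        # metacharacters in model-generated arguments are not interpreted.
        argv = (self.binary, *args)
        start = time.monotonic()
        try:
            proc = subprocess.run(list(argv), capture_output=True, text=True,
                                  timeout=self.timeout_s, check=False)
        except subprocess.TimeoutExpired:
            # Record the timed-out call before propagating the error.
            self.audit_log.append({"argv": argv, "returncode": None,
                                   "duration_s": time.monotonic() - start})
            raise
        result = CliResult(argv, proc.returncode, proc.stdout, proc.stderr,
                           time.monotonic() - start)
        self.audit_log.append({"argv": argv, "returncode": proc.returncode,
                               "duration_s": result.duration_s})
        return result
```

For example, assuming osquery is installed on the host, `CliTool("osqueryi", audit_log)("--json", "SELECT pid, name FROM processes LIMIT 5").parse_json()` would return the process list as Python objects while recording the call in `audit_log`.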

Trust Is Architectural, Not Optional

Financial institutions operating under DORA face a 4-hour window for the initial notification of a major incident. The EU AI Act classifies AI systems used in financial risk assessment as high-risk, triggering mandatory human oversight requirements under Article 14. Neither a fully autonomous AI nor a purely human SOC can meet both constraints: the first fails the oversight requirement, and the second cannot triage at the speed the notification window demands. Together, they require bounded autonomy.

CyberAId’s human-in-the-loop (HITL) architecture implements three tiers: routine, reversible actions (known-indicator blocking, alert triage) run autonomously; ambiguous situations are evaluated against confidence thresholds and escalated to an analyst when confidence is low; and high-severity actions (network segmentation changes, major incident declarations) require explicit analyst approval. Every decision, automated or human, is recorded with full provenance: what data was used, what reasoning was applied, which tools were called, and what the confidence score was at decision time.
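The three tiers map naturally onto a small policy function. In this Python sketch the action names, the 0.85 threshold, and the rule that any unlisted action counts as ambiguous are assumptions for illustration; `decide` is a hypothetical helper that writes a provenance record carrying the four elements listed above before returning.

```python
from collections.abc import Iterable
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "autonomous"
    ESCALATE = "escalate_to_analyst"
    APPROVAL_REQUIRED = "analyst_approval_required"


# Hypothetical policy values; in practice these come from governance config.
ROUTINE_REVERSIBLE = frozenset({"block_known_indicator", "triage_alert"})
HIGH_SEVERITY = frozenset({"change_network_segmentation", "declare_major_incident"})
CONFIDENCE_THRESHOLD = 0.85


@dataclass(frozen=True)
class Decision:
    action: str
    tier: Tier
    confidence: float              # confidence score at decision time
    data_refs: tuple[str, ...]     # what data was used
    reasoning: str                 # what reasoning was applied
    tools_called: tuple[str, ...]  # which tools were called
    decided_at: str                # UTC timestamp


def classify(action: str, confidence: float,
             threshold: float = CONFIDENCE_THRESHOLD) -> Tier:
    if action in HIGH_SEVERITY:
        return Tier.APPROVAL_REQUIRED  # never autonomous, whatever the confidence
    if action in ROUTINE_REVERSIBLE:
        return Tier.AUTONOMOUS
    # Anything else is treated as ambiguous and gated on confidence.
    return Tier.AUTONOMOUS if confidence >= threshold else Tier.ESCALATE


def decide(action: str, confidence: float, data_refs: Iterable[str],
           reasoning: str, tools_called: Iterable[str],
           ledger: list[Decision]) -> Decision:
    decision = Decision(action, classify(action, confidence), confidence,
                        tuple(data_refs), reasoning, tuple(tools_called),
                        datetime.now(timezone.utc).isoformat())
    ledger.append(decision)  # provenance is recorded before anything executes
    return decision
```

Analyst approvals and overrides would be appended to the same ledger as their own records, so automated and human decisions share one audit trail.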

Guardrails operate at every agent boundary: prompt injection detection on input, and output sanitisation and data leakage prevention before a response is returned. The vector stores that ground each agent are themselves security-sensitive: they are partitioned, encrypted, and monitored for integrity, because published research has shown that knowledge-base poisoning attacks can exceed 90% success rates against unprotected RAG systems.
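One simple way to monitor a vector store for integrity is a keyed hash manifest over its chunks. The Python sketch below assumes chunks are addressable by ID and that the HMAC key is held outside the store (for instance in a KMS); `chunk_tag`, `build_manifest`, and `verify` are hypothetical names. It detects content modified, added, or removed after the manifest was built, but it cannot catch poisoned documents that were already present at signing time, which still requires vetting at ingestion.

```python
import hashlib
import hmac


def chunk_tag(key: bytes, chunk_id: str, text: str) -> str:
    # Bind the ID to the content so a chunk cannot be swapped in under another ID.
    message = chunk_id.encode() + b"\x00" + text.encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def build_manifest(key: bytes, chunks: dict[str, str]) -> dict[str, str]:
    """Record one HMAC-SHA256 tag per chunk ID at a trusted point in time."""
    return {cid: chunk_tag(key, cid, text) for cid, text in chunks.items()}


def verify(key: bytes, chunks: dict[str, str],
           manifest: dict[str, str]) -> dict[str, list[str]]:
    """Report chunks modified, added, or removed since the manifest was built."""
    modified = [
        cid for cid, text in chunks.items()
        if cid in manifest
        # compare_digest avoids leaking tag prefixes through timing.
        and not hmac.compare_digest(manifest[cid], chunk_tag(key, cid, text))
    ]
    added = [cid for cid in chunks if cid not in manifest]
    removed = [cid for cid in manifest if cid not in chunks]
    return {"modified": sorted(modified), "added": sorted(added),
            "removed": sorted(removed)}
```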

What This Changes

The dominant approach to AI in security operations today is copilot integration: a single LLM added to an existing SIEM, assisting analysts with natural language queries. This is a useful productivity improvement that does not change the fundamental model. It still depends on analysts reviewing alerts at scale; it still lacks cross-domain synthesis; it still cannot produce the continuous, evidence-backed, compliance-mapped analysis that DORA and the EU AI Act increasingly require.

CyberAId’s multi-agent architecture is a different proposal: not a smarter tool inside an existing workflow, but a different workflow where domain expertise is encoded in specialised agents, coordination is explicit and auditable, knowledge is grounded in authoritative sources, and human oversight is structural rather than procedural. The seven specialists do not replace the security team. They change what the security team is asked to do.

References

- CardinalOps. 5th Annual State of SIEM Detection Risk Report (2025).

- SANS Institute. 2025 SOC Survey. https://www.sans.org/white-papers/sans-2025-soc-survey

- Srinivas, Kirk, Zendejas et al. AI-Augmented SOC: A Survey of LLMs and Agents for Security Automation (MDPI Journal of Cybersecurity and Privacy, November 2025). 40% reduction in false positives for insider threat detection. https://www.mdpi.com/2624-800X/5/4/95

- arXiv. Using LLMs for Security Advisory Investigations: How Far Are We? (arXiv:2506.13161, 2025). LLMs failed to flag fabricated CVE-IDs as invalid. https://arxiv.org/html/2506.13161v1

- arXiv. CyberRAG: An Agentic RAG Cyber Attack Classification and Reporting Tool (arXiv:2507.02424, 2025). 94.92% classification accuracy with domain-partitioned vector stores. https://arxiv.org/pdf/2507.02424

- Model Context Protocol. Security Best Practices (Official Specification). https://modelcontextprotocol.io/specification/draft/basic/security_best_practices

- Google Cloud. The Dawn of Agentic AI in Security Operations — RSAC 2025. https://cloud.google.com/blog/products/identity-security/the-dawn-of-agentic-ai-in-security-operations-at-rsac-2025